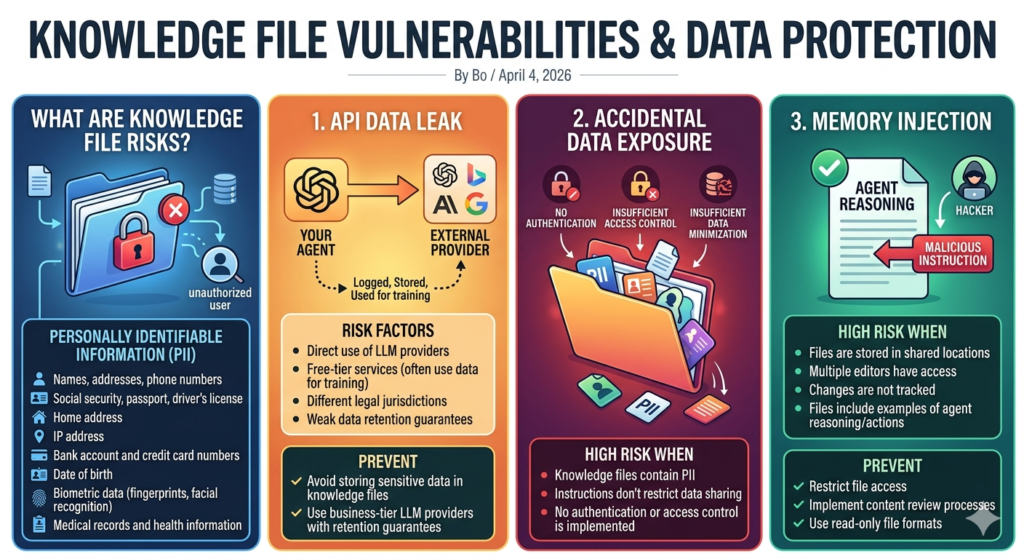

# Knowledge File Vulnerabilities & Data Protection

## What Are Knowledge File Risks?

These risks arise when sensitive information stored in knowledge files can be accessed by unauthorized users.

## Personally Identifiable Information (PII)

Sensitive data includes:

- Names, home addresses, phone numbers
- Social Security, passport, and driver’s license numbers
- IP addresses
- Bank account and credit card numbers
- Date of birth
- Biometric data (fingerprints, facial recognition)
- Medical records and health information

## 3 Types of Knowledge Leakage

### 1. API Data Leak

Occurs when your agent sends knowledge file data to third-party providers.

**Examples of providers:**

- OpenAI
- Anthropic
- Google

**Risk Factors:**

- Direct use of LLM providers
- Free-tier services (often use data for training)
- Different legal jurisdictions
- Weak data retention guarantees

**Why It’s Risky**

All data passed to external providers is subject to their policies. It may be:

- Logged
- Stored
- Used for training

**Prevention:**

- Avoid storing sensitive data in knowledge files
- Use business-tier LLM providers with retention guarantees

### 2. Accidental Data Exposure

**High Risk When:**

- Knowledge files contain PII
- Instructions don’t restrict data sharing
- No authentication or access control is implemented

### 3. Memory Injection

**What It Is**

Attackers insert malicious instructions into knowledge files, and the agent treats them as legitimate.

**High Risk When:**

- Files are stored in shared locations
- Multiple editors have access
- Changes are not tracked
- Files include examples of agent reasoning/actions

**Prevention:**

- Restrict file access
- Implement content review processes
- Use read-only file formats
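
One way to address the "changes are not tracked" risk under Memory Injection is to record a digest of each knowledge file when it passes content review, then compare against those digests before the agent loads the files. The sketch below is a minimal illustration of that idea, not a prescribed implementation. The function names (`build_manifest`, `find_unreviewed_changes`) are hypothetical, and it assumes the approved manifest is kept somewhere file editors cannot write to.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the hex SHA-256 digest of a file's bytes."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def build_manifest(folder: Path) -> dict[str, str]:
    """Map each file under `folder` (by relative path) to its digest.

    Intended to be run once the files have passed content review.
    """
    return {
        p.relative_to(folder).as_posix(): sha256_of(p)
        for p in sorted(folder.rglob("*"))
        if p.is_file()
    }


def find_unreviewed_changes(
    folder: Path, approved: dict[str, str]
) -> dict[str, list[str]]:
    """Compare the folder's current state with the approved manifest."""
    current = build_manifest(folder)
    return {
        # Same path, different content since review.
        "modified": sorted(
            name
            for name in current
            if name in approved and current[name] != approved[name]
        ),
        # Files that appeared after review.
        "added": sorted(current.keys() - approved.keys()),
        # Reviewed files that have since disappeared.
        "removed": sorted(approved.keys() - current.keys()),
    }
```

A deployment could refuse to load the knowledge folder, or fall back to the last approved copy, whenever any of the three lists is non-empty. This only detects changes. It does not judge whether the content is malicious, so it complements the review process and does not replace it.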