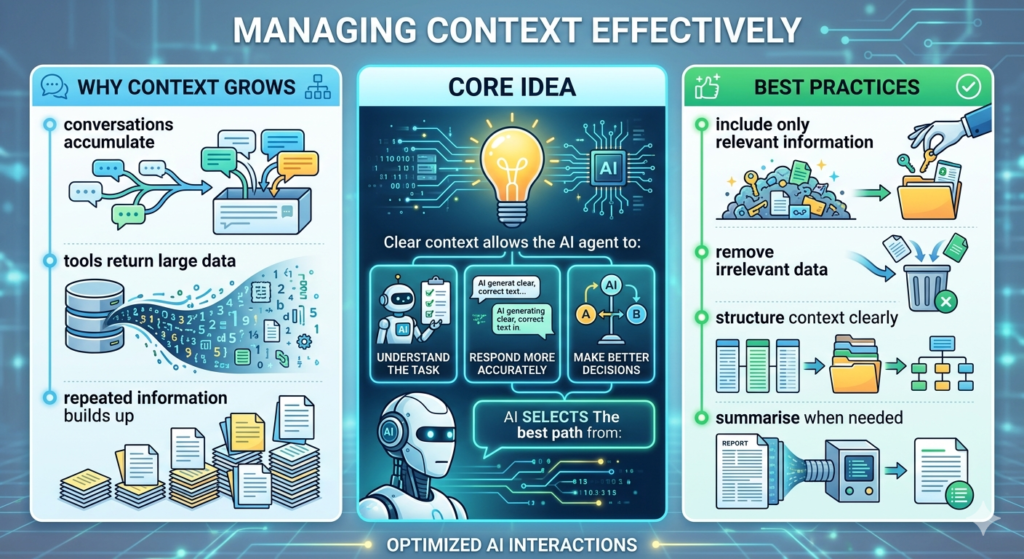

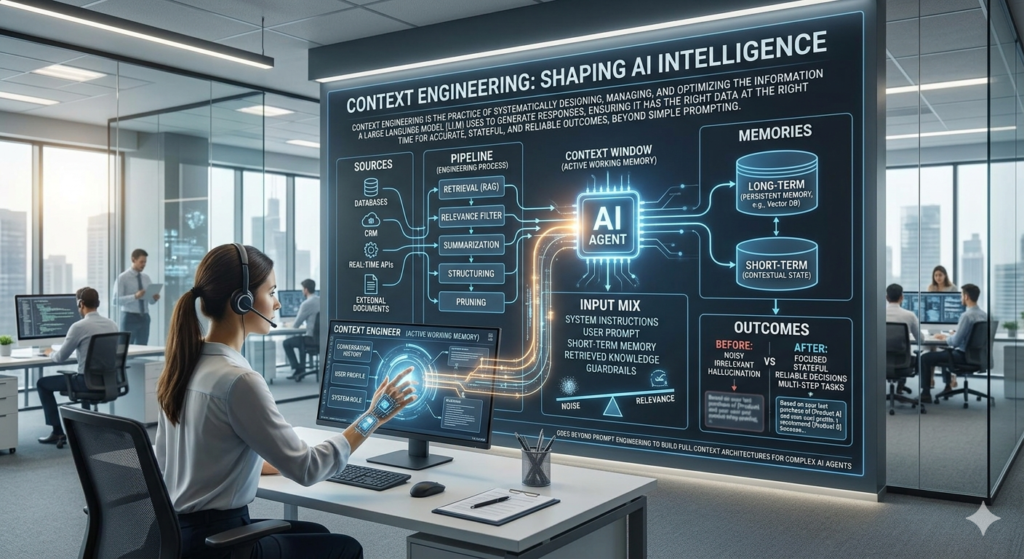

# Context Windows, Tokens & Limits

## Context Window

The context window is the maximum amount of information an AI model can process at once.

### What It Includes

- System prompt
- Conversation history
- Tool outputs
- Tool definitions

### Why It's Critical

- Context accumulates automatically
- It consumes token budget
- Overflow reduces performance

## Context Trade-offs

**Smaller context**

- More selective
- Requires careful prompting

**Larger context**

- More flexibility
- But more complexity and noise

## Token Behaviour

- More context ≠ always better
- More tokens = higher cost
- Diminishing returns after a point
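The system prompt, conversation history, tool outputs, and tool definitions all draw on one shared token budget, so it can help to see the accounting written out. The following is a minimal sketch, not a definitive implementation: `ContextBudget` and `estimate_tokens` are hypothetical names, the "about 4 characters per token" rule is a rough assumption standing in for a real tokenizer, and dropping the oldest history first is just one possible overflow policy.

```python
from dataclasses import dataclass, field


def estimate_tokens(text: str) -> int:
    """Crude estimate: about 4 characters per token (an assumption, not a real tokenizer)."""
    return max(1, len(text) // 4) if text else 0


@dataclass
class ContextBudget:
    """Hypothetical helper that tracks what occupies the context window."""

    max_tokens: int  # assumed window size; real limits depend on the model
    system_prompt: str = ""
    tool_definitions: list[str] = field(default_factory=list)
    # Conversation turns and tool outputs, oldest first.
    history: list[str] = field(default_factory=list)

    def fixed_cost(self) -> int:
        """Tokens spent on every request: system prompt plus tool definitions."""
        return estimate_tokens(self.system_prompt) + sum(
            estimate_tokens(t) for t in self.tool_definitions
        )

    def used(self) -> int:
        """Total estimated tokens currently in the window."""
        return self.fixed_cost() + sum(estimate_tokens(m) for m in self.history)

    def add(self, message: str) -> list[str]:
        """Append a message, then drop the oldest entries until the total fits.

        Returns the dropped entries. The newest message is always kept,
        even if it alone exceeds the budget.
        """
        self.history.append(message)
        dropped: list[str] = []
        while self.used() > self.max_tokens and len(self.history) > 1:
            dropped.append(self.history.pop(0))
        return dropped


if __name__ == "__main__":
    budget = ContextBudget(
        max_tokens=40,
        system_prompt="You are a helpful assistant.",
        tool_definitions=["search(query: str) -> list[str]"],
    )
    for turn in ["Hello, can you help me?", "Sure, what do you need?", "x" * 120]:
        dropped = budget.add(turn)
        print(f"used={budget.used()} dropped={len(dropped)}")
```

Because context accumulates automatically, the fixed cost is paid on every request while history keeps growing. A real system might summarize older turns rather than discard them, which trades some extra tokens for less information loss.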