Guardrails – The Foundation of Safe AI Systems

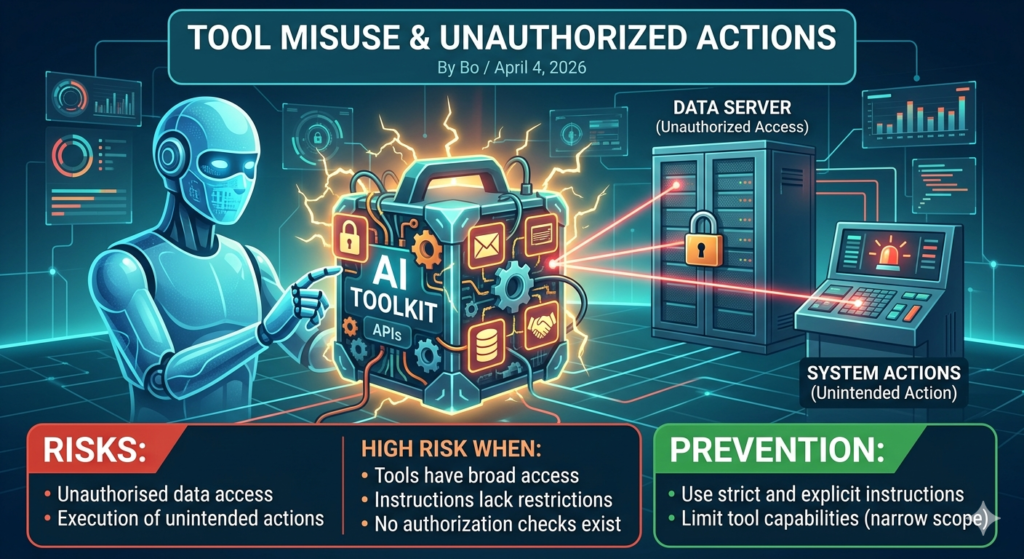

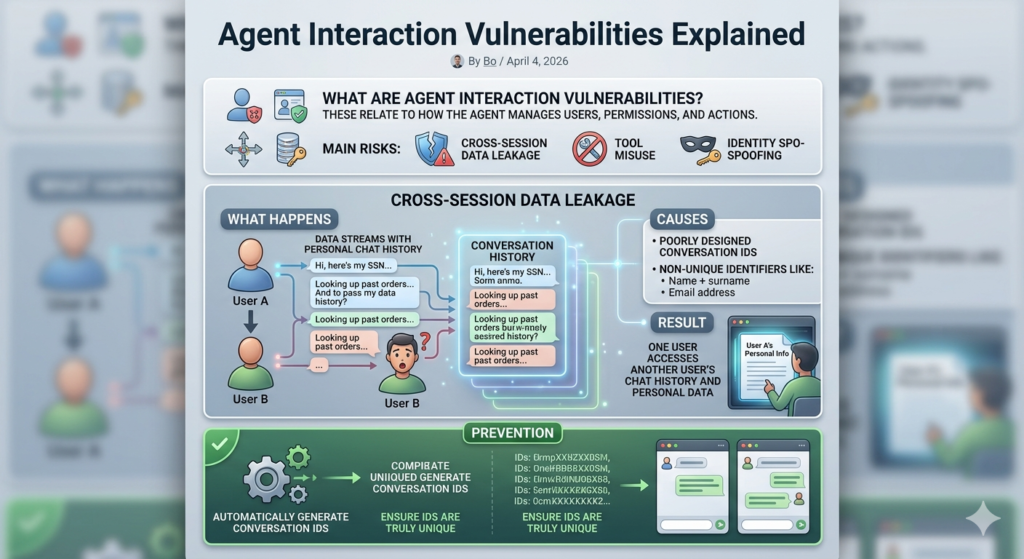

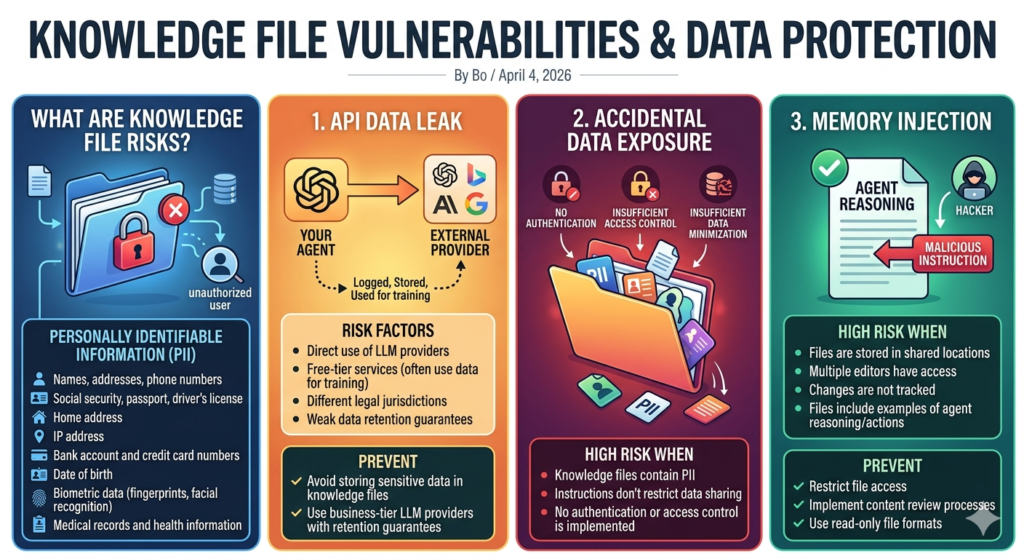

What Are Guardrails?

Rules and constraints that prevent AI systems from operating outside intended boundaries.

Core Guardrails:

1. Scope Limitation: Only give access to tools when absolutely necessary.
2. Authentication Restrictions: Require identity verification before interaction.
3. Data Access Boundaries: Clearly define what each tool can access and do.
4. Input Validation: Ensure all inputs are safe and expected.
5. Tool Usage Restrictions: Design tools with narrow, specific purposes.
6. Approval Workflows: Require approvals for sensitive actions.
7. Testing: Continuously test the agent for vulnerabilities.

Final Thoughts

AI agent security is not a single feature; it is a system of layered protections across data, tools, identity, and interactions. The safest agents are designed with minimal exposure, strict controls, and continuous oversight.
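To make the layering concrete, here is a minimal Python sketch of how several of the core guardrails (scope limitation, authentication, input validation, and approval workflows) might be enforced at a single choke point before any tool runs. Every name in it (`Tool`, `GuardedAgent`, `GuardrailError`, `lookup_order`, `refund_order`) is hypothetical and chosen for illustration; it does not reflect any particular agent framework, and a real system would need proper identity checks and audit logging.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Set


class GuardrailError(Exception):
    """Raised when a tool call violates a guardrail (hypothetical name)."""


@dataclass
class Tool:
    name: str
    func: Callable[[str], str]       # narrow, single-purpose action
    validate: Callable[[str], bool]  # input validation for this tool
    sensitive: bool = False          # sensitive tools need explicit approval


@dataclass
class GuardedAgent:
    authenticated: bool              # stand-in for real identity verification
    allowed_tools: Set[str]          # scope limitation: explicit allowlist
    registry: Dict[str, Tool] = field(default_factory=dict)

    def register(self, tool: Tool) -> None:
        self.registry[tool.name] = tool

    def call(self, tool_name: str, arg: str, approved: bool = False) -> str:
        # Authentication: refuse any interaction without a verified identity.
        if not self.authenticated:
            raise GuardrailError("authentication required")
        # Scope limitation: the tool must exist and be on the allowlist.
        if tool_name not in self.registry or tool_name not in self.allowed_tools:
            raise GuardrailError(f"tool not permitted: {tool_name}")
        tool = self.registry[tool_name]
        # Input validation: reject anything the tool does not expect.
        if not tool.validate(arg):
            raise GuardrailError(f"invalid input for {tool_name}")
        # Approval workflow: sensitive actions need an explicit sign-off.
        if tool.sensitive and not approved:
            raise GuardrailError(f"approval required for {tool_name}")
        return tool.func(arg)


agent = GuardedAgent(authenticated=True, allowed_tools={"lookup_order"})
agent.register(Tool("lookup_order", lambda oid: f"order {oid}: shipped", str.isdigit))
agent.register(
    Tool("refund_order", lambda oid: f"refunded {oid}", str.isdigit, sensitive=True)
)

print(agent.call("lookup_order", "1234"))
```

In this sketch the refund tool is registered but stays unreachable until it is added to `allowed_tools`, and even then it runs only when `approved=True` is passed. Routing every call through one method is a design choice that also gives continuous testing a single place to probe.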