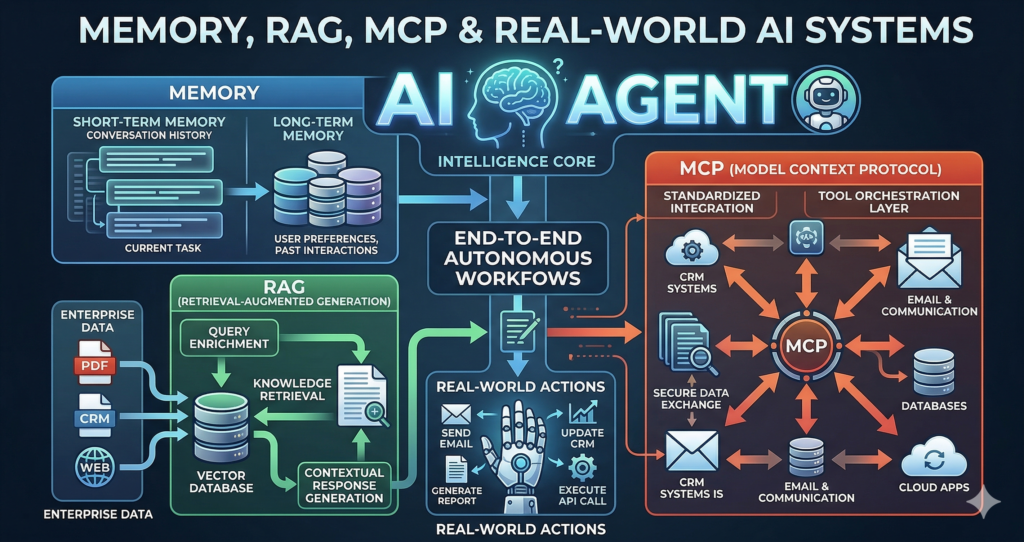

Memory, RAG, MCP & Real-World AI Systems

- AI Memory
  - Short-term Memory
  - Long-term Memory
  - Context Window
  - Conversation ID
- RAG – Deep Dive
  - Steps:
- Knowledge Files vs Tools
  - Knowledge Files: full context, static data
  - Tools: targeted retrieval, dynamic access
- Direct File Processing
- MCP Architecture
  - Core Elements
  - MCP Communication
  - Uses:
  - Security
- Real-World AI Agent Examples
  1. Customer Invoice Agent
  2. Meeting Assistant
  3. Sales Assistant
  4. Security Agent
- Why Agentic Automation?
- Final Thought
  - AI is evolving from:
  - Understanding LLMs and systems like MCP and RAG gives you the ability to: